A System and Organization Controls (SOC) 2 report is the result of an attestation engagement performed by a licensed Certified Public Accountant (CPA) firm. The report evaluates a service organization’s internal controls relevant to one or more of the Trust Services Criteria (TSC) established by the American Institute of Certified Public Accountants (AICPA): Security (also known as the Common Criteria), Availability, Processing Integrity, Confidentiality, and Privacy. For AI companies, a SOC 2 audit assesses the controls governing the systems used to deliver services, including the entire machine learning lifecycle from data ingestion and model training to deployment and inference.

This isn’t a separate certification for AI. It’s the application of the existing AICPA framework to the risks inherent in machine learning systems: you prove you can manage your data and operations with integrity, viewed through the lens of AI risk.

Defining SOC 2 in the World of AI

At its core, a SOC 2 report verifies that an AI company has implemented effective internal controls, measured against the TSCs. For an AI company, this means an auditor is examining how you manage the specific risks that come with building and deploying models. This scrutiny moves beyond typical IT general controls and focuses on the machine learning operations (MLOps) that underpin your service.

Why does this matter for someone pursuing SOC 2? Understanding this definition is critical because it frames the entire audit. Your goal is not to prove your AI is “good” but to demonstrate that the systems and processes surrounding your AI are controlled and secure, as defined by the AICPA’s criteria. This distinction is fundamental to scoping the audit and preparing the correct evidence.

Why This Gets Complicated for AI

An auditor won’t just look at your servers and firewalls. They’ll map the established TSCs directly to the unique failure modes of an AI product. Unlike a standard SaaS platform, you have to prove you can handle risks that many companies never encounter.

- Model Integrity: How do you prove your models haven’t been tampered with or unintentionally degraded? This ties directly to the Processing Integrity category, whose objective is that system processing is complete, valid, accurate, timely, and authorized, and specifically to PI1.3, which covers policies and procedures over system processing.

- Data Poisoning: What controls prevent a malicious actor from feeding your model poisoned training data, causing it to produce biased or incorrect outputs? This is a fundamental security threat that falls squarely under the Security criterion, particularly CC6.8 (preventing or detecting and acting upon the introduction of unauthorized or malicious software) and CC7.2 (monitoring system components for anomalies that indicate malicious acts).

- Algorithmic Bias: You need controls to identify, measure, and mitigate unintended bias in your model’s predictions. From a SOC 2 perspective, significant bias can lead to inaccurate outputs, which has direct implications for Processing Integrity (PI1.2) and can also violate Privacy commitments if it leads to discriminatory outcomes based on personal information.

- Training Data Security: If your models are trained on sensitive customer data, you must have robust controls to protect it. This is a classic challenge that involves both the Confidentiality and Security criteria. For instance, CC6.1 requires logical access security measures over protected information assets, which in this case include your training datasets.

Why does this matter for someone pursuing SOC 2? These unique AI risks must be translated into the language of SOC 2 controls. Failing to map a risk like “data poisoning” to a specific control auditable under the Security TSC means you will have a gap in your audit. A solid foundation in data security compliance is the necessary starting point for building out these AI-specific controls.

A SOC 2 audit for an AI company isn’t about server security alone; it’s about providing evidence that you have effective controls over the entire machine learning lifecycle—from data ingestion and training to model deployment and monitoring.

Ultimately, this all comes down to building a governance framework that an auditor can understand and verify. When you can show them robust controls for model versioning, clear data lineage, and strict access management, you’re not just checking a compliance box. You’re demonstrating a mature security posture that builds real customer trust and helps you close bigger deals, faster.

How to Scope Your SOC 2 Audit for AI Systems

Correctly scoping a SOC 2 audit is the most critical decision an AI company will make in the compliance process. The scope defines the boundary around the systems, people, data, and processes that your auditor will test. For an AI service, this boundary must encompass the entire machine learning lifecycle, from the raw data you ingest to the predictions your model generates.

Why does this matter for someone pursuing SOC 2? An incorrect scope is a common cause of audit findings and spiraling costs. Scoping too narrowly (e.g., excluding the model training environment) creates a significant assurance gap that undermines the report’s value to informed customers. Scoping too broadly (e.g., including internal R&D sandboxes) wastes months and significant budget auditing systems that are not relevant to the service you provide to customers.

Identifying Your Core Service and Boundaries

Before listing systems, you must answer the auditor’s first question: “What specific service are you providing that requires this attestation?” This answer defines the “system” for the purpose of the SOC 2 report and serves as the anchor for the entire audit.

For example, if you sell a fraud detection API, your service boundary is the production environment that accepts customer data, processes it through your model, and returns a risk score. The people who manage that API, the infrastructure it runs on, and the processes for training and updating the model are all in scope. Systems that play no part in delivering that service, such as internal R&D sandboxes, are out of scope.

Actionable Guidance: Your SOC 2 report’s system description must clearly define the AI service you deliver. Write it from the customer’s perspective first. Then, work backward to identify every technical component and human process required to operate that service reliably and securely.

Mapping the AI Lifecycle to the Audit Scope

Once your service is defined, you must map your entire AI lifecycle to the audit scope. AI systems have unique components not found in traditional SaaS, and your auditor will expect to see controls for each one.

Your scoping exercise should produce a complete inventory of these components:

- Data Ingestion Pipelines: The systems that acquire data for training and inference, including raw data sources, ETL/ELT jobs, and the data lakes or warehouses where data is stored.

- Model Training Environments: The infrastructure where models are built. This includes cloud environments, container registries, and source code repositories for model development, training, and validation.

- Model Deployment and Inference: The production systems, including the CI/CD pipeline that promotes models to production and the infrastructure that serves predictions via your API.

- Data Storage: All databases and storage buckets containing customer data, training data, model artifacts, and system logs.

- Supporting Technologies: Critical third-party tools like authentication services, monitoring and logging platforms (Datadog, Sentry), and any subservice organizations like a data labeling service. If it is essential for your service delivery, it’s in scope.

- People and Processes: The ML engineers, DevOps teams, and data scientists who access this infrastructure, along with the formal procedures they follow for change management, incident response, and access reviews.

Making Key Scoping Decisions

Scoping requires making judgment calls. For example, should you include the specific open-source libraries used to build a model? The answer is generally no. The audit focuses on your controls over your environment, not the internal security of a third-party component.

However, you must demonstrate a process to manage vulnerabilities within those libraries (e.g., software composition analysis). That process, which supports CC7.1, is absolutely in scope.

Why does this matter for someone pursuing SOC 2? Every component listed in your system description must have corresponding controls that an auditor can test. This direct line connecting your service, your infrastructure, and your controls is what gives a SOC 2 report its credibility. Getting the scope right ensures the audit provides meaningful assurance to your customers and is a foundational step for a successful outcome.
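
The rule that every in-scope component needs at least one testable control can be checked mechanically. The following is a minimal sketch, assuming the inventory is kept as structured data; the component names, categories, and control IDs (such as `CTRL-DATA-01`) are hypothetical placeholders, not AICPA identifiers.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """One in-scope item from the system description (illustrative only)."""
    name: str
    category: str  # e.g. "data_ingestion", "model_training"
    controls: list = field(default_factory=list)  # internal control IDs


def components_without_controls(inventory):
    """Return the names of in-scope components that have no mapped control."""
    return [c.name for c in inventory if not c.controls]


# Hypothetical inventory for a small AI service.
inventory = [
    Component("etl-nightly-job", "data_ingestion", ["CTRL-DATA-01"]),
    Component("training-cluster", "model_training", ["CTRL-ACCESS-02"]),
    Component("inference-api", "model_deployment", []),  # gap: nothing mapped
]

gaps = components_without_controls(inventory)
print("Components missing controls:", gaps)
```

Running a check like this during readiness work surfaces scoping gaps before the auditor does.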

Mapping AI Risks to the Trust Services Criteria

A SOC 2 auditor does not evaluate the quality of your AI algorithm. They evaluate your internal controls against the language of the Trust Services Criteria (TSC)—Security, Availability, Processing Integrity, Confidentiality, and Privacy. Your responsibility is to translate your unique AI risks into this framework of controls.

Why does this matter for someone pursuing SOC 2? This translation is not a paperwork exercise; it is the core of the audit preparation. It turns abstract concerns like “model theft” into concrete controls an auditor can test against specific AICPA criteria. Failure to perform this mapping correctly leads to audit findings and remediation efforts.

Security: More Than Just Firewalls

The Security criterion (Common Criteria) is the mandatory foundation for every SOC 2 audit. For an AI company, it extends beyond network security to protect the intellectual property of your models and the data that trains them.

Your auditor will look for evidence of controls against AI-specific threats:

- Model Theft: To prevent reverse-engineering of your model via API abuse, you need controls like rate-limiting on inference endpoints and monitoring for anomalous query patterns. This directly maps to CC7.2 (The entity monitors system components and the operation of those components for anomalies that are indicative of malicious acts, natural disasters, and errors affecting the entity’s ability to meet its objectives; anomalies are analyzed to determine whether they represent security events).

- Training Data Exposure: Your training data is a critical asset. Auditors will test your role-based access controls (RBAC) to ensure only authorized personnel can access it. This is a primary test for CC6.3 (The entity authorizes, modifies, or removes access to data, software, functions, and other protected information assets based on roles and responsibilities, giving consideration to least privilege and segregation of duties).

- Data Poisoning: To prevent malicious data from corrupting your model, you need strong input validation on data ingestion pipelines and immutable storage for clean datasets. This helps satisfy CC6.8 (The entity implements controls to prevent or detect and act upon the introduction of unauthorized or malicious software).
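
As an illustration of the rate-limiting control mentioned for model theft, here is a minimal in-memory sketch of a sliding-window limiter keyed by API key. The class name and parameters are assumptions for this example; a production service would more likely rely on its API gateway or a shared store rather than process memory.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowRateLimiter:
    """Allow at most `max_requests` per `window_seconds` for each API key."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # api_key -> times of allowed calls

    def allow(self, api_key: str, now: Optional[float] = None) -> bool:
        """Return True if the call is allowed; `now` can be injected for tests."""
        now = time.monotonic() if now is None else now
        calls = self._history[api_key]
        # Drop timestamps that have aged out of the window.
        while calls and now - calls[0] >= self.window_seconds:
            calls.popleft()
        if len(calls) >= self.max_requests:
            return False  # caller should reject the request and log the event
        calls.append(now)
        return True


limiter = SlidingWindowRateLimiter(max_requests=100, window_seconds=60.0)
if not limiter.allow("customer-key-123"):
    print("rate limit exceeded")
```

The rejected calls are as useful as the limit itself: logging them gives the anomaly-monitoring control something to alert on.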

Availability: Keeping Your AI Online

The Availability criterion assesses whether your customers can rely on your service to be accessible as promised. For an AI company, this centers on the uptime and resilience of your inference APIs and ML infrastructure.

Why does this matter for someone pursuing SOC 2? To satisfy the Availability criterion, you must provide evidence that you have planned for and can mitigate disruptions.

- High-Uptime APIs: Auditors will require evidence of load balancers, auto-scaling groups for model-serving endpoints, and tested failover environments. Capacity controls such as auto-scaling support A1.1, which covers monitoring and managing processing capacity. Failover environments support A1.2, which covers environmental protections, data backup processes, and recovery infrastructure.

- Model Disaster Recovery: You need a documented and tested disaster recovery plan. This must include procedures for restoring model artifacts, configurations, and the entire serving infrastructure from a backup. Evidence that the plan is tested supports A1.3, which covers testing of recovery plan procedures.

Processing Integrity: Proving Your Model Does What You Say It Does

Processing Integrity is arguably the most critical and complex TSC for an AI company. It addresses the auditor’s core question: “Is your system’s output complete, valid, accurate, timely, and authorized?”

An auditor will never accept your claim that your model “works.” They demand objective evidence that you have controls to ensure its outputs are consistently reliable and that you can detect and correct issues like performance drift.

Key controls are non-negotiable:

- Model Versioning: You must maintain a clear, auditable history of every deployed model version. Using tools like Git LFS or DVC for tracking model artifacts provides auditors with concrete evidence for PI1.3 (system processing).

- Drift Monitoring: Models degrade over time. You need automated monitoring that tracks key performance metrics (e.g., accuracy, precision) against a baseline and generates alerts when performance deviates. This is critical evidence for PI1.4.

- Output Validation: Implementing sanity checks to ensure model outputs are within expected ranges or formats provides another layer of assurance that the system delivers complete and accurate output under PI1.4.
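
The drift-monitoring and output-validation controls above can be sketched in a few lines. This is a minimal illustration, assuming a single accuracy-style metric, a fixed tolerance of 0.05, and a model that returns a score between 0 and 1; all three are assumptions for the example, and real thresholds should come from your own service commitments.

```python
import math


def check_drift(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """Return True when `current` has dropped more than `tolerance` below `baseline`."""
    return (baseline - current) > tolerance


def validate_score(score) -> float:
    """Reject outputs outside the range the service promises (assumed 0 to 1)."""
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise ValueError(f"score must be numeric, got {type(score).__name__}")
    if math.isnan(score) or not 0.0 <= score <= 1.0:
        raise ValueError(f"score {score!r} is outside the expected range [0, 1]")
    return float(score)


# Example: weekly accuracy compared with the accuracy recorded at release.
if check_drift(baseline=0.92, current=0.85):
    print("ALERT: model accuracy has drifted below tolerance")
```

For audit purposes the alert record matters as much as the check: an auditor will want to see that alerts fired and that someone responded to them.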

The table below breaks down how these AI-specific risks map to the SOC 2 framework.

AI Risk Mapping to SOC 2 Trust Services Criteria

| AI Risk | Relevant Trust Services Criterion | Example Control for SOC 2 Evidence |
|---|---|---|
| Model Theft/Extraction | Security (CC7.2) | Screenshots of rate-limiting configurations on inference API gateways; anomaly detection alert logs. |
| Training Data Poisoning | Security (CC6.8) | Code reviews of data ingestion scripts showing input validation; change logs from an immutable data store. |
| Inference API Downtime | Availability (A1.1, A1.2, A1.3) | Cloud configuration records showing load balancers and auto-scaling rules; disaster recovery test results. |
| Model Performance Drift | Processing Integrity (PI1.3, PI1.4) | Dashboards from a monitoring tool showing model accuracy tracked over time; alert records for performance degradation. |
| Lack of Model Traceability | Processing Integrity (PI1.3) | Git commit history for model code and artifacts; records from a model registry like MLflow or Weights & Biases. |
| Sensitive Data in Training Sets | Confidentiality (C1.1) / Privacy | Documentation of data anonymization techniques; code showing tokenization or PII removal before training. |
| Unauthorized Model Access | Security (CC6.3) | IAM role definitions restricting access to model registries; access logs showing only authorized personnel accessed models. |

The TSCs contain no AI-specific criteria, so auditors apply the existing criteria to your AI governance practices. Robust AI governance best practices are therefore table stakes for passing a modern SOC 2 audit.

Confidentiality and Privacy: Guarding Sensitive Data

If your AI processes sensitive information, the Confidentiality and Privacy criteria are likely in scope. Confidentiality concerns the protection of business data as promised to customers. Privacy concerns the protection of personally identifiable information (PII) according to your privacy notice and established privacy principles.

- Data Handling During Training: A key control is demonstrating how you protect sensitive data before it is used in a training set. This can be evidenced through documentation and code reviews of data anonymization, pseudonymization, or tokenization processes.

- Preventing Data Leakage: You must have controls to prevent a model from inadvertently disclosing sensitive training data in its responses. This can involve specific fine-tuning methods or output filtering, which an auditor would review.
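
One way to evidence the data-handling control above is a pseudonymization step that runs before records reach a training set. The sketch below uses a keyed hash (HMAC-SHA256) from the Python standard library; the field names in `PII_FIELDS` and the sample record are hypothetical, and the key is assumed to live in a secrets manager rather than in code.

```python
import hashlib
import hmac

PII_FIELDS = {"email", "full_name"}  # hypothetical field names


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Replace PII field values with a keyed hash; leave other fields untouched."""
    out = {}
    for key, value in record.items():
        if key in PII_FIELDS and value is not None:
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            out[key] = digest.hexdigest()
        else:
            out[key] = value
    return out


# Demo key only; a real key must never be hard-coded.
demo_key = b"demo-key-do-not-use"
raw = {"email": "jane@example.com", "full_name": "Jane Doe", "amount": 42.5}
print(pseudonymize(raw, demo_key))
```

Because the hash is keyed and deterministic, the same person maps to the same token across records, which preserves joins for training. Note that this is pseudonymization, not anonymization: anyone holding the key can re-link tokens to known values, so access to the key needs its own control.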

Mapping your unique AI challenges to the established TSCs is the heart of SOC 2 prep. It turns a generic compliance checklist into a strategic, actionable plan for building a system that’s not just compliant, but genuinely secure and reliable.

Essential Controls and Evidence for Your AI Platform

Passing a SOC 2 audit requires providing objective evidence that your controls are implemented and operating effectively. For an AI company, this means generating tangible proof of governance over the entire machine learning lifecycle.

Why does this matter for someone pursuing SOC 2? An auditor cannot test informal practices. They require system-generated logs, policy documents, and configuration screenshots to verify your control environment. Lacking an auditable trail is a common point of failure for technically proficient AI companies.

Data and Model Lineage

An auditor will test your ability to trace a model’s output back to the specific data and code used to create it. This concept, lineage, is foundational to Processing Integrity (PI1.2 for inputs and PI1.3 for processing) and to change management under Security (CC8.1).

Actionable Guidance:

- Data Lineage: Track data from its origin through all transformations into your training datasets. Evidence includes ETL/ELT job logs, data flow diagrams, and database schemas. The auditor needs proof that the data fueling your models is complete and accurate.

- Model Versioning: Version-control all models, datasets, and code. Using Git for code and a system like DVC or Git LFS for large model artifacts is a common way to do this; SOC 2 does not mandate specific tools, only that the history is complete and auditable. Evidence includes commit histories and version tags that link a deployed model back to its source.

A common audit procedure involves the auditor selecting a deployed model version and requesting its complete training history—the exact dataset, code, and parameters used. Your ability to produce this information promptly is a direct test of your controls.
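
To answer that kind of request quickly, it helps to write a lineage record at training time. The following is a minimal sketch, assuming the dataset can be hashed as bytes and the code version is a Git commit ID; the `LineageRecord` fields and sample values are illustrative, and tools such as DVC or a model registry can capture the same information.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def sha256_bytes(data: bytes) -> str:
    """Content hash used to pin the exact dataset that was trained on."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class LineageRecord:
    """Links one deployed model version to its dataset, code, and parameters."""
    model_version: str
    dataset_sha256: str
    code_commit: str
    hyperparameters: dict

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    def matches_dataset(self, data: bytes) -> bool:
        """Check whether `data` is byte-for-byte the dataset that was recorded."""
        return sha256_bytes(data) == self.dataset_sha256


# Hypothetical values for illustration.
training_data = b"txn_id,amount,label\n1,10.0,0\n2,9500.0,1\n"
record = LineageRecord(
    model_version="fraud-model-1.4.0",
    dataset_sha256=sha256_bytes(training_data),
    code_commit="<git commit id>",
    hyperparameters={"learning_rate": 0.01, "epochs": 20},
)
print(record.to_json())
```

Storing the JSON alongside the model artifact means the auditor’s sample request becomes a lookup rather than an investigation.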

These controls are critical. Research indicates top-quartile organizations could face over 2,100 data policy incidents per month by 2026 tied to generative AI, with developers uploading proprietary code (42% of incidents) or regulated data (32%) into unsecured AI models.

Access Control and Change Management

Auditors will heavily scrutinize who can access and modify your AI systems. The principle of least privilege must be enforced across your entire MLOps stack, mapping directly to Security criterion CC6.3, which governs logical access.

Your evidence must include:

- IAM Policies: Screenshots and exports of your cloud provider’s Identity and Access Management (IAM) role definitions. These policies must show that a data scientist cannot unilaterally deploy a model to production and that an ML engineer cannot access raw sensitive data without justification.

- Change Management Tickets: Every model deployment or critical system change must be documented in a system like Jira. These tickets provide a complete audit trail for CC8.1 (Change Management), including documented approvals, testing results, and rollback plans.
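
The approval, testing, and rollback requirements above can be enforced as a gate in the deployment pipeline. This is a minimal sketch under the assumption that ticket data has already been fetched from your ticketing system; the `ChangeTicket` fields are hypothetical and do not correspond to any real Jira API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ChangeTicket:
    """Subset of change-ticket fields relevant to a deployment gate (illustrative)."""
    ticket_id: str
    author: str
    approver: Optional[str]
    tests_passed: bool
    rollback_plan: str


def deployment_blockers(ticket: ChangeTicket) -> List[str]:
    """Return the reasons a deployment must not proceed; empty means it may."""
    blockers = []
    if not ticket.approver:
        blockers.append("missing approval")
    elif ticket.approver == ticket.author:
        # Segregation of duties: the author cannot approve their own change.
        blockers.append("author cannot approve own change")
    if not ticket.tests_passed:
        blockers.append("tests not passed")
    if not ticket.rollback_plan.strip():
        blockers.append("missing rollback plan")
    return blockers


ticket = ChangeTicket("CHG-101", "alice", "alice", True, "Redeploy previous version")
print(deployment_blockers(ticket))
```

A gate like this turns the policy into a preventive control, and the pipeline log of each decision becomes evidence in its own right.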

Audit-Ready Logging and Monitoring

If a control’s operation cannot be proven with logs, it effectively does not exist for audit purposes. For AI platforms, this extends beyond server access logs to include unique activities within your ML systems, satisfying criteria like CC7.2 (monitoring for anomalies) and A1.1 (monitoring processing capacity and use).

To be audit-ready, you must log and monitor:

- Inference Requests: Track every API call to your models, including timestamps and user identifiers. This is your primary defense for detecting model abuse.

- Model Performance: Implement continuous monitoring that tracks model accuracy against a defined baseline. Alerts for performance degradation (“drift”) are crucial evidence for the Processing Integrity criterion.

- Anomalous Behavior: Configure your monitoring systems to detect and alert on anomalous patterns, such as a sudden spike in errors or queries from an unrecognized IP range.
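
The logging items above can be sketched as a structured log entry per inference request plus a simple error-rate check. The field names and the 25% threshold are assumptions for illustration; the log deliberately records request metadata rather than the request payload, which may itself contain sensitive data.

```python
import json
from datetime import datetime, timezone
from typing import Iterable, Optional


def inference_log_entry(
    user_id: str,
    model_version: str,
    latency_ms: float,
    status_code: int,
    now: Optional[datetime] = None,
) -> str:
    """Build one JSON log line for an inference request (metadata only)."""
    now = now or datetime.now(timezone.utc)
    entry = {
        "timestamp": now.isoformat(),
        "user_id": user_id,
        "model_version": model_version,
        "latency_ms": latency_ms,
        "status_code": status_code,
    }
    return json.dumps(entry, sort_keys=True)


def error_rate_exceeded(status_codes: Iterable[int], threshold: float = 0.25) -> bool:
    """Return True when the share of 5xx responses is above `threshold`."""
    codes = list(status_codes)
    if not codes:
        return False
    errors = sum(1 for code in codes if code >= 500)
    return errors / len(codes) > threshold


print(inference_log_entry("user-42", "fraud-model-1.4.0", 18.5, 200))
```

Emitting one JSON object per line keeps the entries easy to ship to whichever monitoring platform you already use.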

A structured approach is essential for gathering this evidence. Using a detailed SOC 2 evidence collection guide can help you build a comprehensive checklist of required documentation.

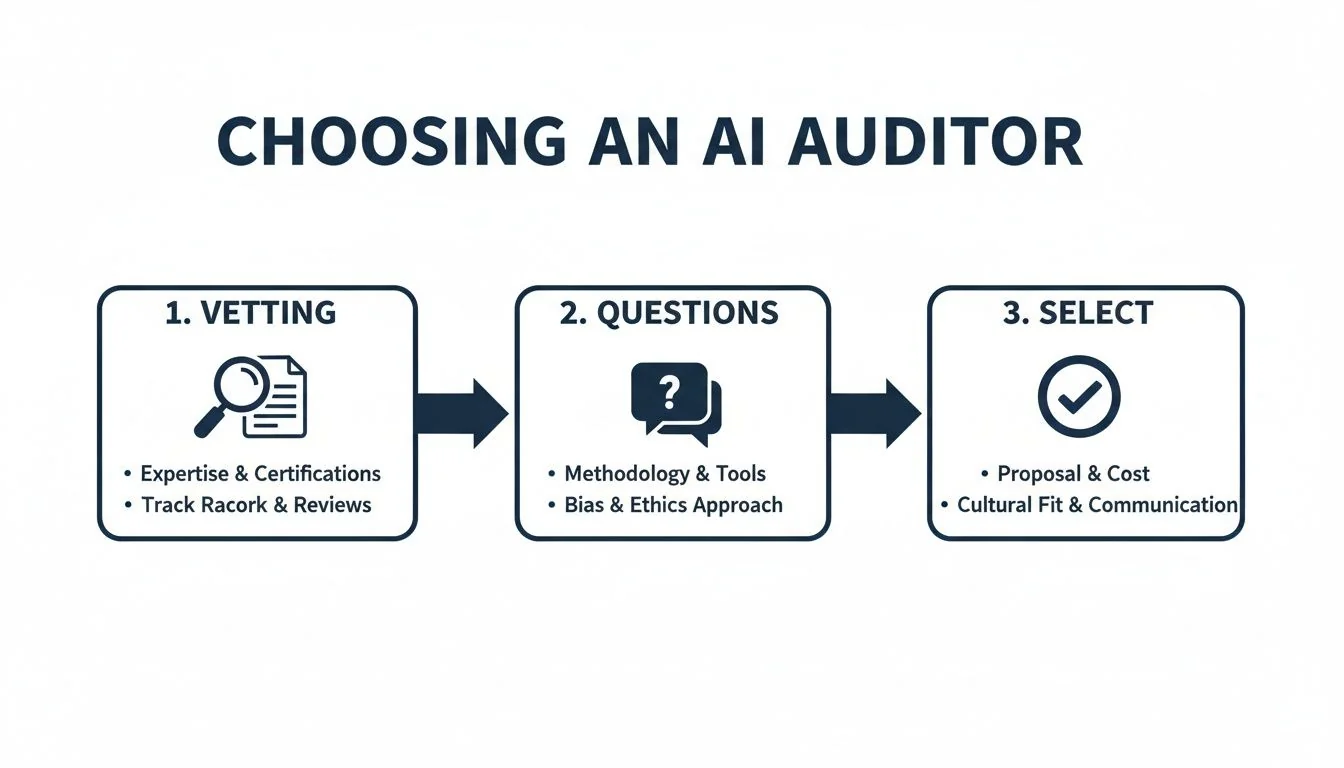

Choosing an Auditor Who Understands AI

Selecting an audit firm is a critical decision in your SOC 2 journey. It is not about finding the lowest cost but finding a partner who understands the unique risks of auditing machine learning systems.

Why does this matter for someone pursuing SOC 2? An auditor lacking specific AI expertise can cause significant friction. They may misinterpret controls, apply inappropriate testing methods to your MLOps stack, or issue findings for irrelevant risks. This leads to wasted time, frustrated engineers, and a report that fails to provide the assurance your enterprise customers require.

The Vetting Process: Questions That Reveal True AI Expertise

Before signing an engagement letter, you must vet potential auditors rigorously. Their answers to technical questions will reveal their experience with AI technologies.

- “Can you describe your experience auditing AI/ML systems similar to ours?” Ask for specifics on model types (e.g., NLP, computer vision) and platforms (AWS SageMaker, custom-built).

- “How do you test controls for model integrity and data bias?” A strong answer will mention testing model versioning systems, reviewing drift monitoring outputs, and examining data pre-processing scripts for bias mitigation techniques.

- “How do you approach auditing controls around training data?” They should discuss testing access controls on data lakes, validating data anonymization processes, and verifying data lineage from source to model.

- “Can you provide redacted reports for other AI or ML companies you have audited?” This provides direct evidence of their experience and reporting style.

A qualified auditor will not just ask for firewall rules. They will ask to see the commit history for your model artifacts, review change management tickets for model deployments, and discuss how you monitor for anomalous inference patterns. Their questions are the best indicator of their expertise.

The market demand for this expertise is growing. By 2026, 53% of organizations plan to pursue AI audits, a figure that rises to 61% for software and AI firms, signaling a major shift in compliance priorities. AI companies selling into the public sector face even stricter scrutiny — see our government contractor compliance guide for the additional requirements that apply. You can find more compliance statistics from recent research.

Boutique Firm vs. Big Four: What’s Right for You?

The choice is often between a technology-focused boutique audit firm and a large, traditional firm (e.g., a Big Four firm). For most AI startups and mid-market companies, a boutique firm is typically a better fit. They are generally more agile, possess deeper technical expertise in modern cloud and AI stacks, and offer more hands-on guidance. A full breakdown can be found in this guide on how to choose a SOC 2 auditor.

Achieving and Maintaining Continuous SOC 2 Readiness

Obtaining a SOC 2 report is a milestone, but the true objective is maintaining that security posture continuously. This is not a one-time project.

Why does this matter for someone pursuing SOC 2? Continuous SOC 2 readiness means embedding security as a discipline throughout the entire machine learning lifecycle. Treating SOC 2 as an annual “fire drill” is inefficient, expensive, and leaves your company exposed between audits. The goal is to build a security program so robust that compliance becomes a natural byproduct of good governance, not a separate effort.

Your SOC 2 Project Timeline: A Realistic View

A realistic project plan is non-negotiable. For a first-time SOC 2, the project should be broken into distinct phases.

A Typical Timeline for Your First SOC 2 Audit:

- Phase 1: Readiness & Scoping (1-3 Months): Define your audit scope, select the appropriate Trust Services Criteria, and perform a gap analysis to identify control deficiencies.

- Phase 2: Remediation & Implementation (2-6 Months): Implement new controls, write policies, and configure logging and monitoring systems to address the identified gaps.

- Phase 3: Evidence Collection for Type 1 (1 Month): Gather evidence that controls are designed and implemented as of a specific point in time for the Type 1 audit.

- Phase 4: Observation Period for Type 2 (3-12 Months): The period during which the auditor tests the operating effectiveness of your controls over time.

- Phase 5: Type 2 Audit & Report (1-2 Months): The auditor reviews evidence from the observation period and issues the final SOC 2 Type 2 report.

Your choice of auditor significantly impacts this timeline and is one of the most critical early decisions.

Vetting an auditor with specific, technical questions ensures you select a partner who understands AI systems and can guide you through the process effectively.

Avoiding Common Pitfalls Post-Audit

The period immediately following a successful audit is when compliance decay often begins.

A SOC 2 report covers a fixed window: a single date for Type 1, or a defined observation period for Type 2. Enterprise customers expect that you will maintain, and even improve, your security posture between audits.

Common post-audit pitfalls to avoid:

- Failing to Maintain Controls: New infrastructure is deployed without proper security configurations, or temporary access rules are not revoked. This “control drift” is a primary cause of findings in subsequent audits.

- Neglecting Evidence Collection: You stop gathering logs, performing access reviews, or documenting model changes, leading to a scramble for evidence at the start of the next audit cycle.

- Treating It as an Annual Event: Security threats are continuous, and so must be your monitoring and response. Automating controls and evidence collection is the only sustainable path to continuous readiness.
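
As one example of automating a control that tends to drift, the sketch below flags temporary access grants that have passed their expiry date. It assumes grants are exported from your IAM system into a simple structure; the `AccessGrant` fields and sample values are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AccessGrant:
    """A time-limited access grant exported from an IAM system (illustrative)."""
    principal: str
    resource: str
    expires_at: datetime


def expired_grants(
    grants: List[AccessGrant], now: Optional[datetime] = None
) -> List[AccessGrant]:
    """Return grants whose expiry has passed and that should be revoked."""
    now = now or datetime.now(timezone.utc)
    return [g for g in grants if g.expires_at <= now]


grants = [
    AccessGrant("contractor-1", "training-data-lake", datetime(2024, 1, 31, tzinfo=timezone.utc)),
    AccessGrant("ml-eng-7", "model-registry", datetime(2099, 1, 1, tzinfo=timezone.utc)),
]
for grant in expired_grants(grants):
    print(f"Revoke {grant.principal} on {grant.resource}")
```

Scheduling a check like this and keeping its output gives you both the control and a running record that it operated between audits.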

True SOC 2 readiness means embedding compliance into your operational DNA. The controls built for the audit—such as model versioning, change management for deployments, and access reviews for data lakes—are not just for the auditor. They are best practices that make your AI platform more robust, secure, and trustworthy, which in turn accelerates sales and builds lasting customer trust. By maintaining these controls, your organization positions itself for a successful and efficient SOC 2 audit year after year, demonstrating an ongoing commitment to security and governance.

Finding the right audit firm is the first step toward a successful SOC 2 journey. At SOC2Auditors, we replace endless sales calls with a data-driven matching platform. Compare 100+ verified firms by price, timeline, and AI expertise to find the perfect partner for your company, fast. Get your free auditor matches at https://soc2auditors.org.